Self-Regulation in AI: Are Companies Taking the Lead?

Are AI companies making sure their products are safe?

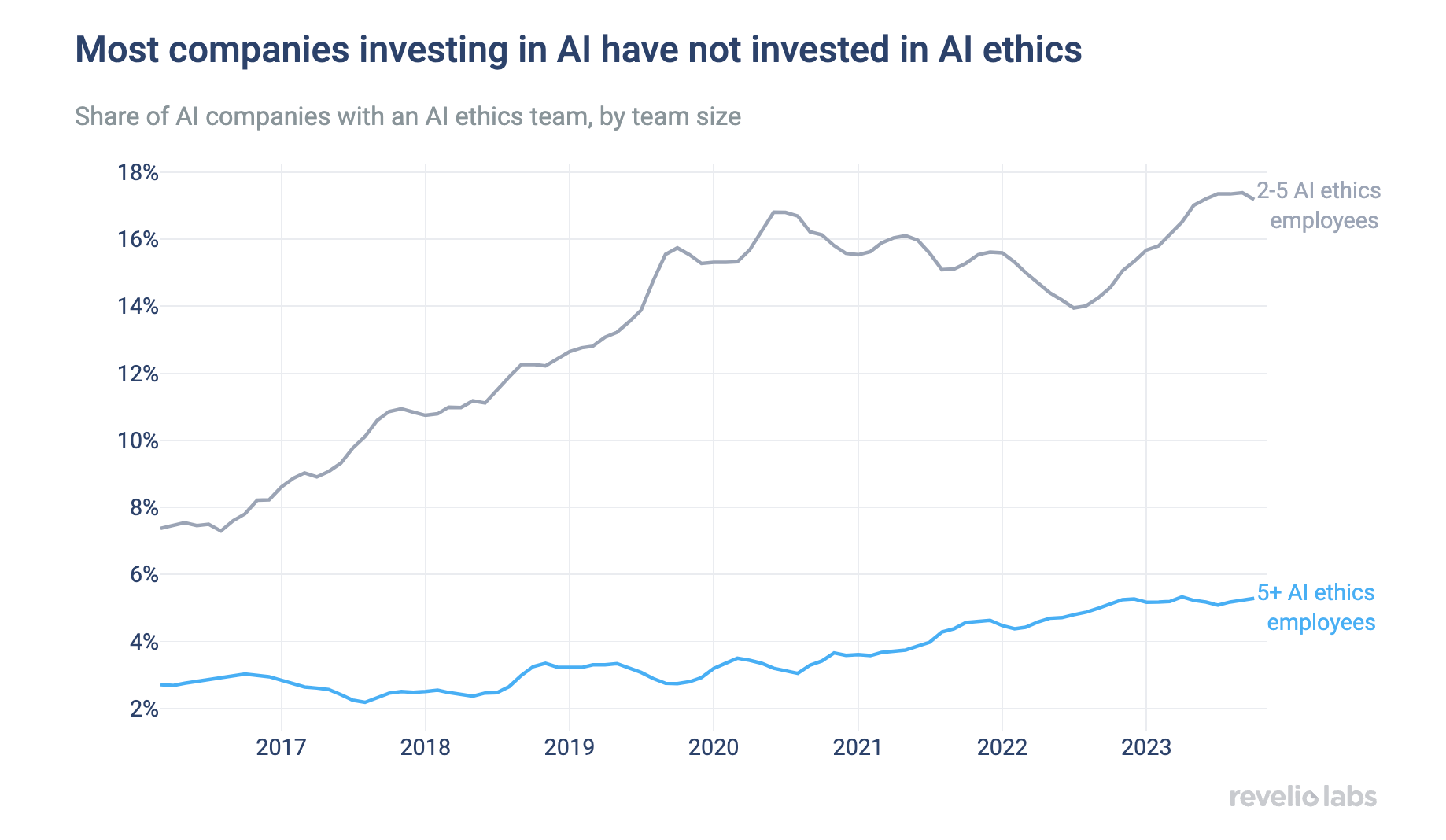

In a world experiencing an AI revolution, it's astonishing that fewer than 20% of AI-invested companies have dedicated AI ethics teams.

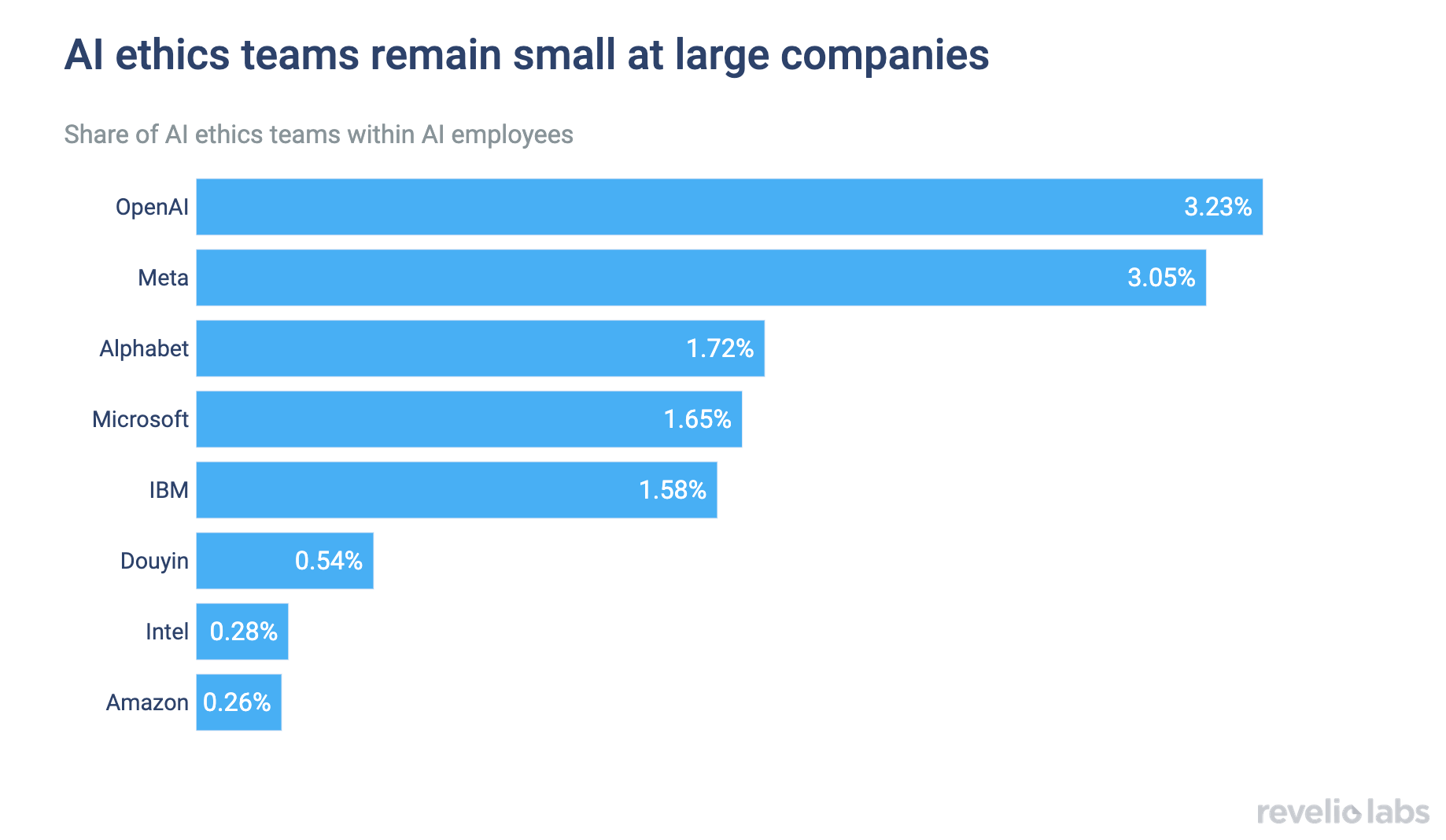

Even in AI giants such as OpenAI, Microsoft, and Meta, there are only about three AI ethics professionals, or fewer, for every 100 AI employees.

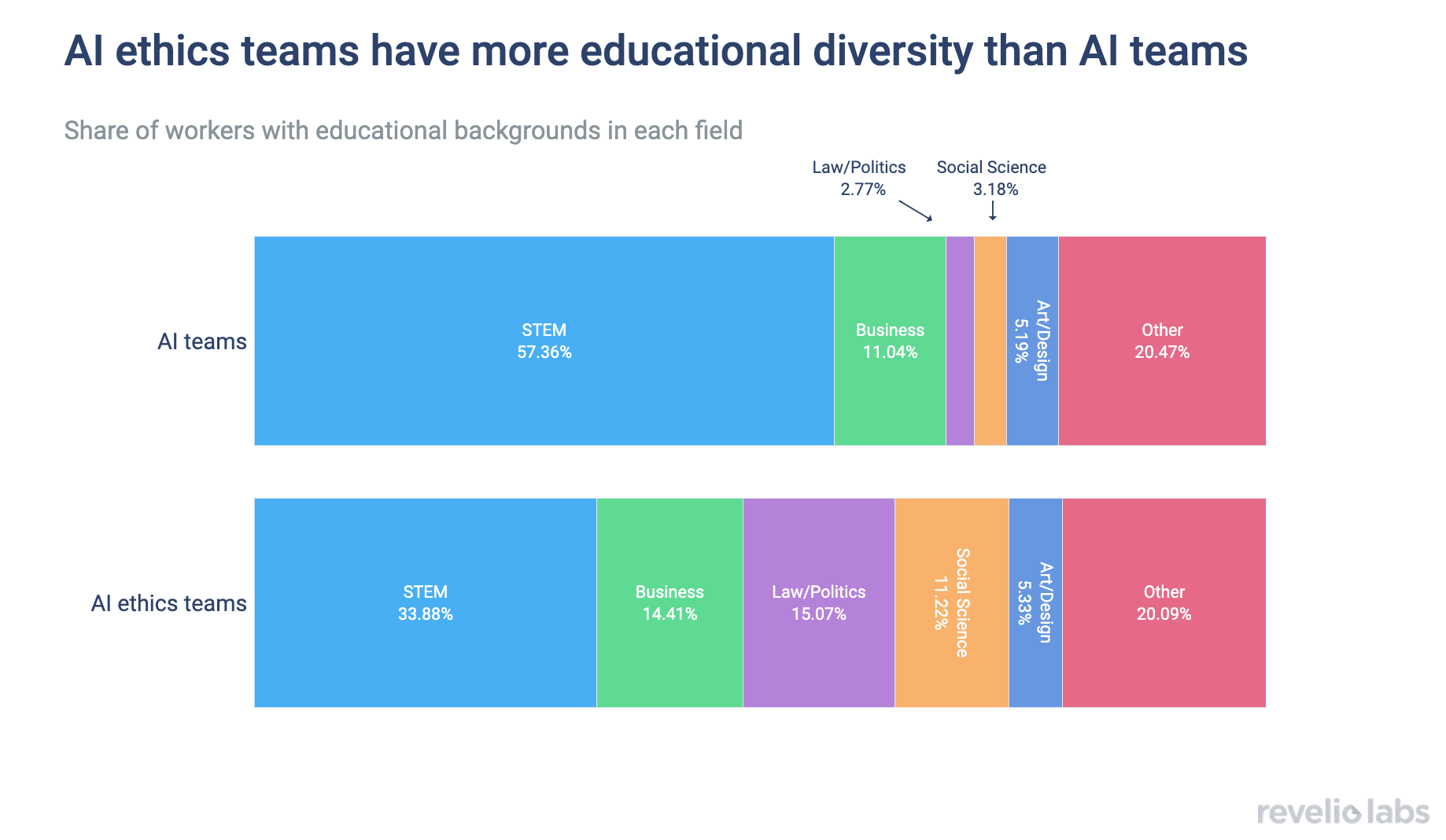

One thing companies have gotten right so far is recruiting professionals from diverse backgrounds to address the multifaceted challenges posed by AI, spanning policy trade-offs, legal frameworks, and broader ethical considerations.

AI is rapidly transforming our daily lives, and its integration into decision-making across industries is becoming increasingly widespread. It now shapes decisions ranging from workforce planning to the evaluation of loan applications. However, it's important to be cautious about the biases these tools might carry. AI algorithms are only as good as the data they are trained on, and if that data contains biases, the algorithm will learn and perpetuate them. This week, Revelio Labs is focusing on the role of AI ethics and assessing whether companies are prioritizing responsible AI practices or whether market capture has taken precedence over ethical considerations.

In a world experiencing an AI revolution, it's surprising that fewer than 20% of AI-invested companies have dedicated AI ethics teams. What's even more concerning is that, even when these teams do exist, they often comprise just a handful of employees tasked with ensuring responsible AI practices. As of 2023, fewer than 5% of companies with large AI teams have more than five AI ethics experts.

But what about the major companies at the forefront of the AI revolution? One would expect them to take greater care in their workforce planning to ensure responsible AI practices are implemented. However, despite the increased push on AI ethics from policymakers, they too are lagging in their investment in ethical AI. The plot below illustrates the share of AI employees who are focused on AI ethics and policy. OpenAI leads the pack; still, only 3.23% of its AI employees are in AI ethics positions. The share is even lower at the other major players in the space, each with fewer than three AI ethics employees per hundred AI employees.

Despite concerns about the prevalence and size of AI ethics teams, Revelio Labs finds some positive signs in their makeup. Beyond sheer numbers, AI ethics also requires an interdisciplinary approach encompassing policy trade-offs, legal frameworks, and broader ethical considerations. Here, companies fare much better. AI ethics teams exhibit a greater diversity of backgrounds than general AI teams, placing a stronger emphasis on social sciences, law, and politics. The diversity of perspectives within these teams makes them well-equipped to identify and address potential biases or unintended consequences stemming from AI use. Now AI companies need only scale up!